How Edge AI Chips Power Real-Time Analytics

Edge AI for real-time analytics means a device can process data on its own, right where the data is generated. It does not need to send everything to the cloud and wait for a response. This matters when latency is critical. A camera that detects a person, a robot that avoids a wall, or a machine that notices a fault must react right away. In all of these cases, the chip inside the device does the main work.

Put simply, the chip takes data such as video, sensor signals, or sound, runs an AI model, and returns a result with minimal delay. That result might be object detection, anomaly detection, speech recognition, or image classification. This is why edge AI hardware matters so much. If the chip is too weak, too slow, or too power-hungry, the whole system becomes harder to use in the real world.
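The take-data, run-model, return-result loop described above can be sketched in a few lines. This is only an illustration: `process_stream`, `FrameResult`, and `fake_model` are hypothetical names, and `fake_model` stands in for whatever inference call a real chip's SDK would provide. The sketch shows the one property that makes the loop "real-time": each result is checked against a latency deadline.

```python
import time
from typing import Any, Callable, Iterable, List, NamedTuple


class FrameResult(NamedTuple):
    output: Any        # whatever the model returned for this frame
    latency_ms: float  # measured wall-clock inference time
    on_time: bool      # True if the result arrived within the deadline


def process_stream(
    frames: Iterable[Any],
    run_model: Callable[[Any], Any],
    deadline_ms: float,
) -> List[FrameResult]:
    """Run the model on each frame locally and record whether it met the deadline."""
    results = []
    for frame in frames:
        start = time.perf_counter()
        output = run_model(frame)
        latency_ms = (time.perf_counter() - start) * 1000.0
        results.append(FrameResult(output, latency_ms, latency_ms <= deadline_ms))
    return results


def fake_model(frame: List[float]) -> str:
    # Hypothetical stand-in for an NPU inference call:
    # flags an anomaly when any sample exceeds a fixed threshold.
    return "anomaly" if max(frame) > 0.9 else "normal"


if __name__ == "__main__":
    sensor_frames = [[0.1, 0.2], [0.5, 0.95]]
    for result in process_stream(sensor_frames, fake_model, deadline_ms=33.0):
        print(result.output, result.on_time)
```

In a real deployment the deadline would come from the application, for example the time between two camera frames, and a missed deadline would usually trigger frame dropping or a lighter model rather than just a flag.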

A few years ago, many edge systems relied mostly on CPUs and sometimes GPUs. Today that is not enough for many real-time workloads. Modern edge AI devices often use NPUs, dedicated AI accelerators, or hybrid chips that combine CPU, GPU, and AI processing blocks. This makes local inference faster and more efficient.

What Makes a Good Edge AI Chip

A good edge AI chip must do more than show a nice number on paper. It has to process data fast enough for real-time tasks, but it also has to stay within power and thermal limits. Many edge devices are small. Some are fanless. Some run all day in factories, stores, vehicles, or outdoor systems. In these cases, efficiency matters just as much as raw performance.

Another important point is software support. A powerful chip is less useful if deployment is difficult or if model support is limited. Memory bandwidth also matters. Some chips can run small models well, but struggle when video resolution increases or when several streams must be processed at the same time.
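A rough calculation shows why resolution and stream count put pressure on memory bandwidth. The sketch below only counts the raw, uncompressed pixels entering the pipeline; the function name and the 3-bytes-per-pixel RGB assumption are illustrative, and real memory traffic is higher because model weights and intermediate activations also move through memory.

```python
def raw_video_bandwidth_bytes(
    width: int,
    height: int,
    bytes_per_pixel: int,
    fps: int,
    streams: int = 1,
) -> int:
    """Bytes per second of uncompressed video fed into the pipeline."""
    return width * height * bytes_per_pixel * fps * streams


if __name__ == "__main__":
    # One 1080p RGB stream at 30 fps.
    single_1080p = raw_video_bandwidth_bytes(1920, 1080, 3, 30)
    # Four 4K RGB streams at 30 fps.
    four_4k = raw_video_bandwidth_bytes(3840, 2160, 3, 30, streams=4)

    print(f"1x 1080p: {single_1080p / 1e6:.1f} MB/s")
    print(f"4x 4K:    {four_4k / 1e6:.1f} MB/s")
    print(f"ratio:    {four_4k / single_1080p:.0f}x")
```

Going from one 1080p stream to four 4K streams multiplies the raw input data by 16, which is why a chip that handles a single camera comfortably can stall when the same model is applied to a multi-camera setup.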

This is why there is no single best chip for every project. Some chips are better for smart cameras. Some are better for robotics. Some work well in low-power devices. Others are built for high-end AI workloads that need more compute and more flexibility.

Table of Contents

- How Edge AI Chips Power Real-Time Analytics

- What Makes a Good Edge AI Chip

- The Most Relevant Edge AI Chips for Real-Time Analytics

- Comparison of Edge AI Chips for Real-Time Analytics

- Why RK3588 Still Matters in This Market

- Why Modular AI Is Growing

- The Real Trade-Offs

- Conclusion

- FAQ

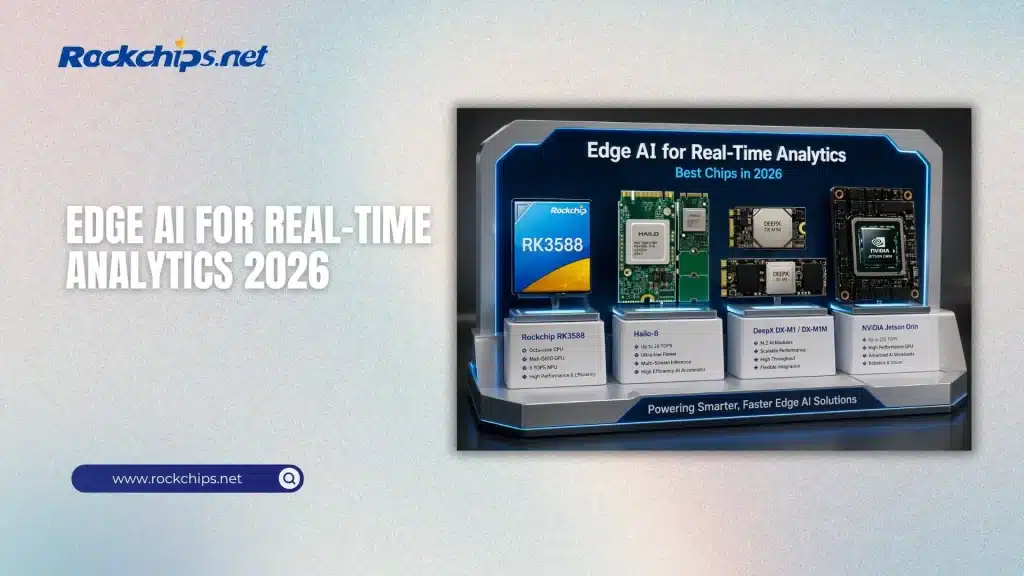

The Most Relevant Edge AI Chips for Real-Time Analytics

Rockchip RK3588 and Next-Gen RK3688

Rockchip RK3588 is one of the most practical chips in this space. It is widely used in RK3588 single-board computers (SBCs), where developers want a full system that can handle multimedia, general computing, and AI inference on one platform. The chip includes an octa-core CPU, a Mali-G610 GPU, and a built-in NPU rated at around 6 TOPS. That does not make it the strongest AI chip on the market, but it makes it a very usable one. It is strong enough for object detection, tracking, video analytics, and many AIoT tasks, while staying in a reasonable power range. For developers watching the next Rockchip generation, the RK3688 vs RK3668 next-generation chip comparison gives useful context on where newer Rockchip designs are heading. For projects where size, power efficiency, and cost matter more than raw TOPS, the compact, vision-focused Rockchip RV1126B remains a practical choice.

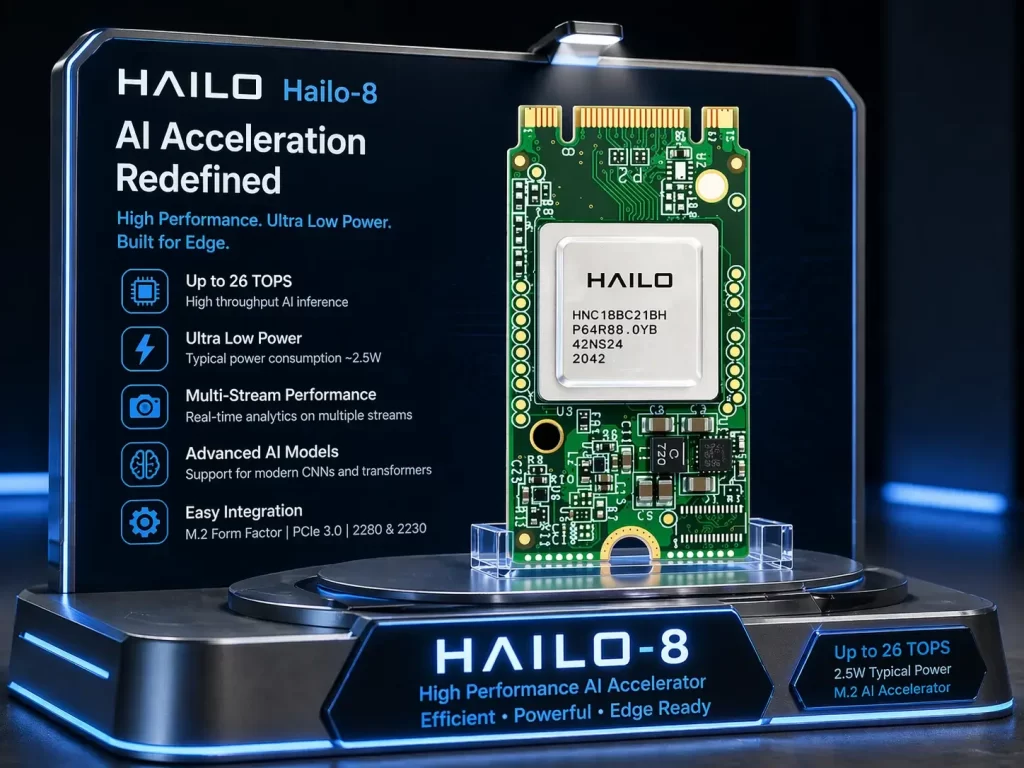

Hailo-8 AI Accelerator

Hailo-8 is a different kind of product. It is not a full SoC. It is a dedicated AI accelerator built mainly for inference. This matters because it focuses its hardware resources on neural network execution instead of trying to do everything at once. In real-time analytics, that can be a major advantage. Hailo-8 is known for strong performance per watt, which makes it attractive for multi-camera video analytics, smart city systems, and retail monitoring. If the goal is to process several streams at low latency without high power draw, Hailo-8 is a serious option. For a more direct look at where it stands against other solutions, see the M.2 AI accelerator comparison of Hailo-8, DeepX and NVIDIA Jetson; these products are often evaluated side by side for edge AI projects.
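"Performance per watt" is easiest to compare when it is computed from measured throughput rather than peak TOPS. The sketch below ranks devices by frames processed per joule (frames per second divided by average watts). The device names and numbers are invented placeholders, not benchmark results for any real product.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Measurement:
    name: str
    frames_per_second: float  # measured sustained throughput on the target model
    average_watts: float      # measured average power draw during the run

    @property
    def frames_per_joule(self) -> float:
        # fps / W = (frames / s) / (J / s) = frames per joule
        return self.frames_per_second / self.average_watts


def rank_by_efficiency(measurements: Iterable[Measurement]) -> List[Measurement]:
    """Most efficient device first."""
    return sorted(measurements, key=lambda m: m.frames_per_joule, reverse=True)


# Hypothetical placeholder measurements, for illustration only.
MEASUREMENTS = [
    Measurement("platform_b", frames_per_second=300.0, average_watts=25.0),
    Measurement("accelerator_a", frames_per_second=120.0, average_watts=4.0),
]

if __name__ == "__main__":
    for m in rank_by_efficiency(MEASUREMENTS):
        print(f"{m.name}: {m.frames_per_joule:.1f} frames per joule")
```

In this made-up example the slower device wins on efficiency, which is the situation the text describes: a dedicated accelerator can be the better fit when the power budget, not peak throughput, is the binding constraint.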

DeepX DX-M1 and DX-M1M

DeepX DX-M1 and DX-M1M show another direction in the market. Instead of relying only on integrated AI hardware inside the main chip, these modules add acceleration through an M.2 slot. That sounds simple, but it solves a real problem. Edge AI workloads often grow over time. A system that starts with light inference may later need more models, more streams, or more demanding vision tasks. In that case, modular AI hardware becomes attractive. You do not always need to redesign the full board. You can scale the AI side more flexibly. For a closer look at how the two DeepX versions differ, see the DeepX DX-M1M vs DX-M1 M.2 module comparison, which is directly relevant to modular edge AI expansion.

NVIDIA Jetson Orin

NVIDIA Jetson Orin remains one of the most powerful edge AI platforms for real-time analytics when raw compute is the priority. It is widely used in robotics, autonomous systems, industrial inspection, and more advanced AI pipelines. The official NVIDIA Jetson Orin technical specifications page shows how this platform scales across different modules for demanding edge AI workloads. That power, however, comes at a cost. Jetson usually uses more power than dedicated NPUs or compact accelerators. So it is often the better choice when the workload is more complex and the device can tolerate higher energy use.

Google Edge TPU

Google Edge TPU belongs to the low-power side of the market. It is more limited than Jetson or some larger accelerators, but it is very useful for simple real-time inference in compact devices. If the task is narrow and efficient deployment matters more than flexibility, Edge TPU still deserves attention. It is especially relevant in small systems that do not need large multimodal models or high-resolution multi-stream analytics.

Intel Core Ultra

Intel Core Ultra chips might not be the first thing people think of when it comes to edge AI, but they still play an important role in real-world setups. Many businesses already use x86 hardware in their systems. For example, kiosks, retail computers, and industrial machines often need AI features but can’t switch to new types of hardware easily. In these cases, Intel’s mix of CPU, GPU, and NPU can be really helpful. It might not be the most efficient choice for every edge AI task, but it’s a good option when staying compatible with existing systems is important.

Qualcomm AI Solution

Qualcomm also deserves a place in the conversation. Its AI hardware is often associated with mobile and low-power systems, but that is exactly why it matters for edge AI. Always-on AI, low-power vision, audio processing, and compact smart devices are all areas where efficient Qualcomm platforms can be relevant.

Comparison of Edge AI Chips for Real-Time Analytics

The table below makes the main differences easier to see.

This comparison also shows why a single ranking is not enough. The best chip depends on what “best” means in the actual project. If power is limited, Hailo-8 or Edge TPU may be more attractive than Jetson. If flexibility and software ecosystem matter most, Jetson can be the stronger choice. If cost and full-system integration matter, an RK3588 single-board computer may be the more realistic option.

Why RK3588 Still Matters in This Market

Some people focus only on the highest TOPS numbers and overlook practical chips like RK3588. That would be a mistake. RK3588 remains relevant because many real products do not need extreme inference power. They need something stable, affordable, and capable enough to run vision models locally while also handling display output, storage, networking, and general system tasks.

That is where the RK3588 SBC market is strong. An RK3588 single-board computer can serve as a smart camera controller, an AI display platform, a local analytics terminal, or part of an industrial monitoring system. In many cases, that is exactly what edge AI buyers are looking for. They do not always need the most powerful accelerator. They need hardware that works reliably and is easy to deploy.

Why Modular AI Is Growing

Another clear trend is modular AI acceleration. M.2 modules are getting more attention because they make upgrades easier. AI workloads change fast. What is enough today may not be enough in a year. With modular acceleration, a system does not always need a full redesign to gain more inference power.

This is especially useful in industrial settings. A company may start with simple defect detection and later move to more cameras, more classes, or more advanced models. A modular path makes that growth easier to manage.
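The growth scenario above can be turned into a simple capacity estimate. This is a back-of-the-envelope sketch: `modules_needed` is a hypothetical helper, the per-module throughput is an assumed figure that would have to be measured for the actual model, and the 80% utilization target is an arbitrary safety margin.

```python
import math


def modules_needed(
    cameras: int,
    fps_per_camera: float,
    module_fps: float,
    target_utilization: float = 0.8,
) -> int:
    """Number of accelerator modules required to keep up with all cameras.

    module_fps is the sustained inferences per second one module delivers
    for the chosen model (an assumption here; measure it in practice).
    """
    if module_fps <= 0:
        raise ValueError("module_fps must be positive")
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    required_fps = cameras * fps_per_camera
    usable_fps_per_module = module_fps * target_utilization
    return math.ceil(required_fps / usable_fps_per_module)


if __name__ == "__main__":
    # Initial deployment: 6 cameras at 15 fps, module assumed to sustain 40 fps.
    print(modules_needed(cameras=6, fps_per_camera=15, module_fps=40))
    # Later expansion to 10 cameras with the same model.
    print(modules_needed(cameras=10, fps_per_camera=15, module_fps=40))
```

With these assumed numbers the deployment grows from three modules to five. The point is that the expansion becomes a matter of adding modules where slots are available, not redesigning the board.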

The Real Trade-Offs

The real choice in edge AI is rarely about one number. It is usually a trade-off between performance, power, flexibility, cost, and software maturity. Jetson offers strong capability, but uses more power. Hailo-8 is efficient, but depends on a host system. RK3588 is not the fastest, but it gives a practical all-in-one base. DeepX is promising for modular deployments, but it depends on how the full system is designed around it.

That is why real-time analytics hardware should be chosen based on the job, not on hype. A factory vision system, a smart retail camera, and a mobile battery-powered device all have different limits. The right chip is the one that fits those limits while still delivering enough inference performance.
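Choosing "based on the job" amounts to filtering candidates by hard limits first and only then comparing what is left. The sketch below shows one way to express that. The candidate categories, their numbers, and the three requirement profiles are all invented for illustration and do not describe any specific product.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Candidate:
    name: str
    power_watts: float
    sustained_fps: float
    unit_cost: float
    needs_host: bool  # True for add-in accelerators that require a host system


@dataclass(frozen=True)
class Requirements:
    max_power_watts: float
    min_fps: float
    host_available: bool


def shortlist(candidates: Iterable[Candidate], req: Requirements) -> List[Candidate]:
    """Keep only candidates that fit every hard limit, cheapest first."""
    fits = [
        c for c in candidates
        if c.power_watts <= req.max_power_watts
        and c.sustained_fps >= req.min_fps
        and (req.host_available or not c.needs_host)
    ]
    return sorted(fits, key=lambda c: c.unit_cost)


# Hypothetical placeholder figures, not vendor specifications.
CANDIDATES = [
    Candidate("all_in_one_soc", 10.0, 60.0, 100.0, needs_host=False),
    Candidate("m2_accelerator", 4.0, 200.0, 80.0, needs_host=True),
    Candidate("high_end_module", 30.0, 600.0, 500.0, needs_host=False),
]

BATTERY_DEVICE = Requirements(max_power_watts=12.0, min_fps=30.0, host_available=False)
FACTORY_VISION = Requirements(max_power_watts=60.0, min_fps=400.0, host_available=True)
RETAIL_CAMERA = Requirements(max_power_watts=15.0, min_fps=50.0, host_available=True)

if __name__ == "__main__":
    for label, req in [("battery", BATTERY_DEVICE),
                       ("factory", FACTORY_VISION),
                       ("retail", RETAIL_CAMERA)]:
        print(label, [c.name for c in shortlist(CANDIDATES, req)])
```

Each profile ends up with a different shortlist from the same three candidates, which mirrors the argument that the right chip depends on the limits of the deployment. A real evaluation would add criteria the sketch leaves out, such as software maturity and model support.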

Conclusion

Edge AI for real-time analytics is no longer a niche topic. It is now part of real products in cameras, robotics, industry, retail, and smart devices. The market includes full SoCs like Rockchip RK3588, dedicated accelerators like Hailo-8 and Edge TPU, modular options like DeepX M.2 products, and high-performance platforms like NVIDIA Jetson Orin.

RK3588 remains a useful choice for many embedded systems because an RK3588 single-board computer can combine general computing and local AI in one practical platform. Hailo-8 stands out for efficient inference. DeepX is relevant because modular AI is becoming more important. Jetson Orin is still a major option when the workload is heavier and power is less constrained.

The main point is simple. There is no universal winner. The best edge AI chip for real-time analytics depends on what the system needs to do, how much power it can use, and how much flexibility the developer wants for future growth.

FAQ

What is the best chip for edge AI real-time analytics?

There is no single best chip for every case. Jetson Orin is stronger for complex workloads, Hailo-8 is better for efficiency, and RK3588 is a practical choice for many embedded products.

Is RK3588 good for AI workloads?

Yes. It is especially useful in an RK3588 single-board computer where moderate AI inference, video processing, and full system integration are all needed together.

Are M.2 AI modules better than integrated NPUs?

Not always. They are better when modular scaling matters. Integrated NPUs are simpler, but M.2 modules can make upgrades easier.

Why is Hailo-8 popular for real-time analytics?

Because it offers strong inference performance with very good power efficiency, especially for vision-heavy workloads.

Why do some projects still use Jetson even if it uses more power?

Because Jetson supports heavier models and a wider software stack, which matters in robotics and complex AI systems.